MCP (Model Context Protocol) Explained — The New Standard for AI Tool Integration in 2026

Every AI coding assistant, every agentic workflow, every LLM-powered automation — they all hit the same wall eventually: connecting the model to the outside world.

Before MCP, every integration was custom. If you wanted Claude to query your Postgres database, you wrote a bespoke function. If you wanted GPT-4 to read your Google Drive, you built a separate connector. If you switched models, you rebuilt everything. Every tool needed its own glue code, and none of it was reusable.

Model Context Protocol (MCP) changes this. Introduced by Anthropic in November 2024 and now governed by the Linux Foundation, MCP is an open standard that gives AI systems a universal way to connect to tools, data sources, and services. It has been called the "USB-C for AI" — one protocol that works across models, platforms, and use cases.

By March 2026, MCP reached 97 million monthly SDK downloads across Python and TypeScript, with over 10,000 public MCP servers available. Every major AI provider — Anthropic, OpenAI, Google, Microsoft, and AWS — now supports it. This is not a niche experiment. MCP is the infrastructure layer that agentic AI runs on.

This guide explains what MCP is, how it works under the hood, how to build your first MCP server, and what you need to know about security. Whether you are building AI-powered developer tools, automating enterprise workflows, or just trying to understand what everyone is talking about — this is where to start.

If you are evaluating AI coding tools that use MCP under the hood, see our comparison of Cursor, Windsurf, and GitHub Copilot and our guide to the best AI code review tools.

What Is Model Context Protocol (MCP)?

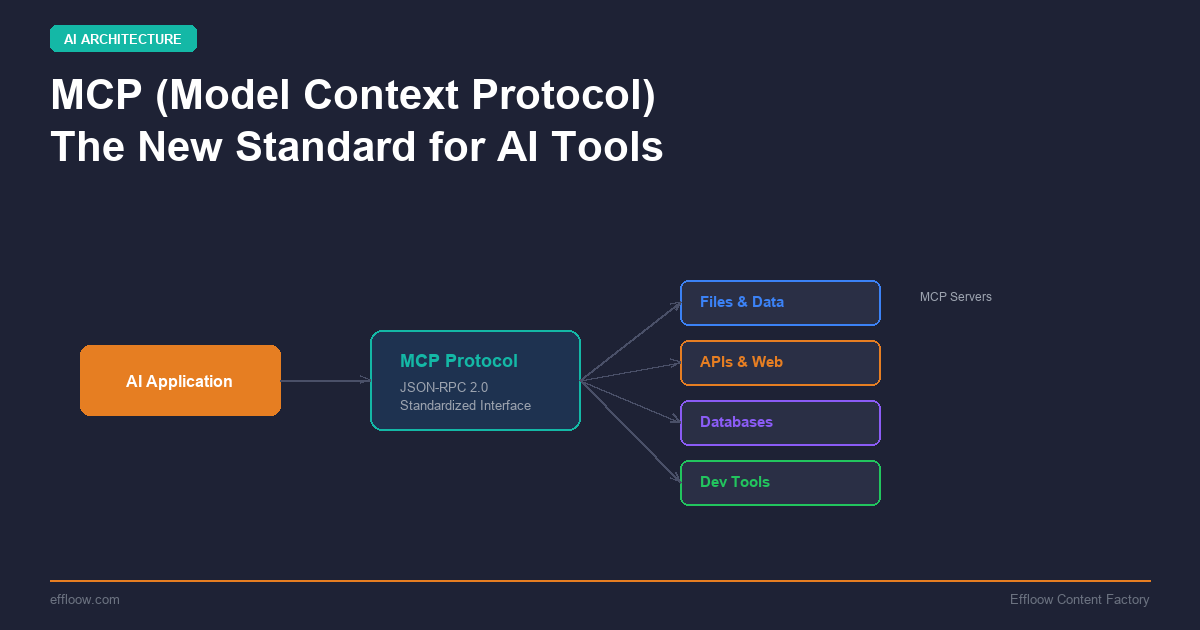

MCP is an open protocol that standardizes how AI applications communicate with external tools and data sources. Think of it as a shared language between an LLM-powered application and the world of APIs, databases, file systems, and services it needs to work with.

Before MCP, the AI ecosystem had an "N × M" problem. If you had N AI models and M tools, you needed N × M custom integrations. Each model provider had its own way of defining tools, passing context, and handling responses. MCP collapses this into an N + M problem: each model implements the MCP client spec once, each tool implements the MCP server spec once, and they all work together.
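To make the arithmetic concrete, here is a small sketch. The ecosystem sizes (5 models, 20 tools) are made-up illustration values, and the function names are hypothetical.

```python
def custom_integrations(models: int, tools: int) -> int:
    """Point-to-point wiring: every model needs its own connector to every tool."""
    return models * tools


def mcp_implementations(models: int, tools: int) -> int:
    """Shared protocol: one client per model plus one server per tool."""
    return models + tools


# Hypothetical ecosystem sizes, chosen only for illustration.
n_models, n_tools = 5, 20
print(custom_integrations(n_models, n_tools))  # 100
print(mcp_implementations(n_models, n_tools))  # 25
```

The gap widens as either side grows, which is why a shared protocol pays off most in large ecosystems.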

Key Facts

- Created by: Anthropic (November 2024)

- Governed by: Agentic AI Foundation (AAIF) under the Linux Foundation, co-founded by Anthropic, Block, and OpenAI (December 2025)

- Specification: Open source, currently at version 2025-11-25 with draft updates for 2026

- Transport: JSON-RPC 2.0 over stdio (local) or Streamable HTTP (remote)

- SDKs available: Python and TypeScript (official), plus SDKs for Go, Rust, Java, Kotlin, C#, Swift, and others, several of which are now maintained under the official MCP organization

- Adoption: 97 million monthly SDK downloads, 10,000+ public servers (as of March 2026)

The USB-C Analogy

The most common way people explain MCP is the USB-C analogy. Before USB-C, you needed different cables for different devices — Lightning for iPhones, Micro-USB for Android, proprietary connectors for laptops. USB-C standardized the physical connection so any device could connect to any peripheral.

MCP does the same thing for AI. Instead of building a custom connector for every tool-model combination, you build one MCP server for your tool and one MCP client in your AI application. They speak the same protocol, so they work together immediately.

Why MCP Matters

The Problem It Solves

AI assistants are only as useful as the context they can access. An LLM that cannot read your codebase, query your database, or check your calendar is limited to answering questions from its training data. The real value of AI in production comes from connecting it to live systems.

Before MCP, developers solved this with:

- Custom function calling — defining tool schemas inline with every API request to the model

- RAG pipelines — stuffing context into prompts via retrieval

- Plugin systems — proprietary frameworks like ChatGPT Plugins (deprecated) or custom agent toolkits

Each approach worked, but none were portable. Switch models and you rewrite your tools. Share a tool with another team and they adapt it to their stack. Build an agent framework and you are locked into one vendor's approach.

What MCP Enables

Write once, use everywhere. Build an MCP server for your Postgres database and it works with Claude, GPT-4, Gemini, or any MCP-compatible client. No code changes needed.

Ecosystem effects. With a standard protocol, a marketplace of pre-built MCP servers has emerged. Need Slack integration? There is an MCP server for that. GitHub? Postgres? Stripe? Google Drive? All available as drop-in servers. See our guide to the top 15 MCP servers every developer should install for specific recommendations.

Agent composability. MCP makes it straightforward for AI agents to discover and use tools at runtime. An agent can connect to multiple MCP servers simultaneously, each exposing different capabilities. This is the foundation for multi-tool agentic workflows. For a comparison of the frameworks that power these agents, see our AI agent frameworks comparison.

Separation of concerns. Tool developers focus on building great tools. AI application developers focus on building great AI experiences. The protocol handles the wiring between them.

How MCP Works: Architecture Deep Dive

MCP uses a client-server architecture with three distinct roles:

The Three Roles

- MCP Host: The user-facing AI application (e.g., Claude Desktop, an IDE like Cursor, or your custom agent). The host manages connections and coordinates between the LLM and MCP clients.

- MCP Client: A protocol-level component inside the host that maintains a 1:1 connection with a specific MCP server. The client handles message framing, capability negotiation, and request routing.

- MCP Server: A lightweight program that exposes specific capabilities through the MCP protocol. Each server provides tools, resources, or prompts, or any combination of the three.

The Three Primitives

MCP servers expose capabilities through three core primitives:

Tools — Executable functions the AI can invoke. Examples: running a database query, creating a GitHub issue, sending a Slack message. Tools are the "actions" an AI can take in the world.

    {
      "name": "query_database",
      "description": "Execute a read-only SQL query against the production database",
      "inputSchema": {
        "type": "object",
        "properties": {
          "sql": { "type": "string", "description": "The SQL query to execute" }
        },
        "required": ["sql"]
      }
    }
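To show how a tool like this is invoked on the wire, here is a minimal sketch of the JSON-RPC 2.0 messages for the `tools/call` method. The helper names, the `users` table, and the result text are hypothetical; only the overall message shape is taken from the MCP specification.

```python
import json


def make_tool_call(request_id: int, name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def make_tool_result(request_id: int, text: str, is_error: bool = False) -> dict:
    """Build the matching response carrying a single text content item."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {
            "content": [{"type": "text", "text": text}],
            "isError": is_error,
        },
    }


# Hypothetical query and result, for illustration only.
request = make_tool_call(1, "query_database", {"sql": "SELECT count(*) FROM users"})
response = make_tool_result(1, "count: 42")

print(json.dumps(request, indent=2))
print(json.dumps(response, indent=2))
```

The `arguments` object must satisfy the tool's `inputSchema`, and the response reuses the request `id` so the client can match the two.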

Resources — Read-only data sources the AI can access for context. Examples: file contents, database schemas, API documentation. Resources provide information without side effects.

Prompts — Reusable templates that structure how the AI interacts with specific tools or resources. Examples: a "code review" prompt template that guides the AI through a structured review process.

Communication Flow

Here is how a typical MCP interaction works:

- The host application starts and connects MCP clients to configured MCP servers.

- During initialization, client and server agree on a protocol version and negotiate capabilities. The client then asks each server which tools, resources, and prompts it offers.

- When the user asks a question, the host sends it to the LLM along with the list of available MCP tools.

- The LLM decides which tool to call (if any) and returns a structured tool call request.

- The host routes the request through the appropriate MCP client to the correct MCP server.

- The server executes the tool and returns results.

- The host passes results back to the LLM for incorporation into the response.
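The flow above can be sketched end to end with stand-ins for the moving parts. Everything here is hypothetical: `FakeServer` replaces a real MCP server, `fake_llm` replaces the model with a keyword rule, and there is no actual transport. The point is only the order of operations and the routing step.

```python
from typing import Callable, Optional


class FakeServer:
    """Stand-in for an MCP server: a name plus a dict of callable tools."""

    def __init__(self, name: str, tools: dict[str, Callable[..., str]]):
        self.name = name
        self.tools = tools

    def list_tools(self) -> list[str]:
        return sorted(self.tools)

    def call_tool(self, tool: str, arguments: dict) -> str:
        return self.tools[tool](**arguments)


def fake_llm(question: str, tool_names: list[str]) -> Optional[dict]:
    """Stand-in for the model: picks a tool using a trivial keyword rule."""
    if "words" in question and "count_words" in tool_names:
        return {"name": "count_words", "arguments": {"text": "one two three"}}
    return None


def run_host(question: str, servers: list[FakeServer]) -> str:
    # Connect and discover: build a routing table from tool name to server.
    routes = {tool: server for server in servers for tool in server.list_tools()}
    # Send the question to the model along with the available tool names.
    call = fake_llm(question, list(routes))
    if call is None:
        return "answered without tools"
    # Route the structured tool call to the server that owns the tool.
    result = routes[call["name"]].call_tool(call["name"], call["arguments"])
    # A real host would pass this back to the model; the sketch returns it directly.
    return result


servers = [
    FakeServer("word-counter", {"count_words": lambda text: str(len(text.split()))}),
    FakeServer("clock", {"current_year": lambda: "unknown"}),
]
print(run_host("How many words are in this text?", servers))  # 3
print(run_host("Hello", servers))  # answered without tools
```

Note that the routing table assumes tool names are unique across servers. A real host has to decide how to handle collisions, for example by prefixing tool names with the server name.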

Transport Options

MCP supports two transport mechanisms:

- stdio: For local servers. The host launches the server as a subprocess and communicates over stdin/stdout using JSON-RPC 2.0. Fast, simple, no network configuration. Best for IDE integrations and developer tools.

- Streamable HTTP: For remote servers. Communication happens over HTTP with server-sent events (SSE) for streaming. Supports multiple concurrent clients. Best for shared team tools and production deployments.
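For the stdio transport, messages are newline-delimited JSON. The sketch below frames and parses two messages using an in-memory buffer in place of a real subprocess pipe. The helper names and the `example-client` identity are assumptions made for illustration.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    line = json.dumps(message, separators=(",", ":"))
    if "\n" in line:  # json.dumps escapes newlines, so this should never trigger
        raise ValueError("message must not contain an embedded newline")
    stream.write(line + "\n")


def read_messages(stream):
    """Yield one parsed message per non-empty line."""
    for line in stream:
        if line.strip():
            yield json.loads(line)


# The first request a client sends when a connection starts.
initialize = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "initialize",
    "params": {
        "protocolVersion": "2025-11-25",
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},
    },
}

pipe = io.StringIO()  # stands in for the server's stdin
write_message(pipe, initialize)
write_message(pipe, {"jsonrpc": "2.0", "method": "notifications/initialized"})
pipe.seek(0)

received = list(read_messages(pipe))
print([m["method"] for m in received])  # ['initialize', 'notifications/initialized']
```

Because stdout carries protocol messages, a stdio server should write its own logs to stderr instead.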

Building Your First MCP Server

The fastest way to understand MCP is to build a simple server. Here is a minimal example in both TypeScript and Python.

TypeScript Example

First, set up your project:

    mkdir my-mcp-server && cd my-mcp-server
    npm init -y
    npm install @modelcontextprotocol/sdk zod

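Create your server in index.ts. The later steps run index.js, so compile the file first (for example with tsc), and set "type": "module" in package.json because the code uses ES module imports and top-level await: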

    import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
    import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
    import { z } from "zod";

    const server = new McpServer({
      name: "word-counter",
      version: "1.0.0",
    });

    // Register a tool: name, description, input schema, handler
    server.tool(
      "count_words",
      "Count the number of words in a given text",
      {
        text: z.string().describe("The text to count words in"),
      },
      async ({ text }) => {
        // filter(Boolean) drops the empty string that split() yields for blank
        // input, so an empty text counts as 0 words instead of 1
        const wordCount = text.trim().split(/\s+/).filter(Boolean).length;
        return {
          content: [
            {
              type: "text",
              text: `The text contains ${wordCount} words.`,
            },
          ],
        };
      }
    );

    // Start the server on stdin/stdout
    const transport = new StdioServerTransport();
    await server.connect(transport);

Python Example

    pip install mcp

Create server.py:

    from mcp.server.fastmcp import FastMCP

    mcp = FastMCP("word-counter")


    @mcp.tool()
    def count_words(text: str) -> str:
        """Count the number of words in a given text."""
        word_count = len(text.split())
        return f"The text contains {word_count} words."


    if __name__ == "__main__":
        mcp.run(transport="stdio")

Connecting to Claude Desktop

Add your server to Claude Desktop's configuration file (claude_desktop_config.json):

    {
      "mcpServers": {
        "word-counter": {
          "command": "node",
          "args": ["/path/to/my-mcp-server/index.js"]
        }
      }
    }

Restart Claude Desktop and your tool appears in the available tools list. Ask Claude to "count the words in this paragraph" and it will invoke your MCP server.

Testing Your Server

The MCP Inspector is the official debugging tool. Run it against your server to verify tools are registered correctly and test them interactively:

    npx @modelcontextprotocol/inspector node index.js

This opens a web interface where you can see your server's capabilities, invoke tools manually, and inspect the JSON-RPC messages flowing between client and server.

Real-World MCP Use Cases

MCP has moved well beyond toy examples. Here are the patterns that are driving real adoption in 2026.

Development Tools

IDEs like Cursor, Windsurf, and Zed use MCP to give AI coding assistants deep project context. Instead of just reading files, an MCP-connected assistant can query your database schema, check CI/CD pipeline status, search your issue tracker, and run tests — all within the same conversation.

For a detailed look at how these AI IDEs compare, including their MCP integration, see our Cursor vs Windsurf vs GitHub Copilot comparison.

Enterprise Integration

MCP servers for Slack, Google Drive, Salesforce, Jira, and other enterprise tools allow AI agents to operate across the organization's toolset. A single agent can read a support ticket from Jira, pull customer data from Salesforce, draft a response, and post it to Slack — all through MCP.

Database Access

Postgres, MySQL, Supabase, and other database MCP servers give AI assistants the ability to query and analyze data directly. For structured data, this can replace fragile RAG pipelines with direct, real-time database access.

Code Review and Quality

AI code review tools use MCP to access repository context beyond the immediate diff. They can check test coverage, verify against coding standards, and understand the broader codebase impact of changes.

See our guide to AI code review tools for tools that leverage this kind of deep context access.

Financial and Payment Systems

Stripe's MCP server allows AI agents to handle invoicing, customer management, and refund processing through natural language. This pattern is extending to other payment and financial systems.

Multi-Agent Orchestration

In multi-agent architectures, MCP provides a shared tool layer. Multiple agents can connect to the same MCP servers, sharing access to tools and data sources without duplicating integration code.

MCP vs Function Calling vs Tool Use

The difference between MCP and function calling is the most common source of confusion. Here is the simple version:

Function Calling (Tool Use)

Function calling is a model-level feature. When you send a request to an LLM API, you include tool definitions as part of the request. The model can then choose to "call" one of those tools by returning structured JSON with the function name and arguments. Your application is responsible for actually executing the function and returning results to the model.

Function calling is how the model decides to use a tool. It is the intent layer.

MCP

MCP is an integration protocol. It standardizes how tools are discovered, described, invoked, and how results are returned — across models, applications, and tool providers.

MCP is how the tool gets connected, described, and executed. It is the infrastructure layer.

How They Work Together

MCP and function calling are complementary, not competing:

- An MCP client connects to an MCP server and discovers available tools.

- Those tool definitions are passed to the LLM as function calling schemas.

- The LLM uses function calling to decide which tool to invoke.

- The MCP client routes the invocation to the appropriate MCP server.

- The server executes the tool and returns results via MCP.

- Results are passed back to the LLM.
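The hand-off from MCP tool definitions to function calling schemas is mostly a renaming exercise. The sketch below converts one MCP tool definition into the tool formats used by the OpenAI Chat Completions API and the Anthropic Messages API, as those formats are commonly documented. Treat the target shapes as assumptions and check the provider documentation before relying on them. The function names are hypothetical.

```python
mcp_tool = {
    "name": "query_database",
    "description": "Execute a read-only SQL query against the production database",
    "inputSchema": {
        "type": "object",
        "properties": {
            "sql": {"type": "string", "description": "The SQL query to execute"}
        },
        "required": ["sql"],
    },
}


def to_openai_tool(tool: dict) -> dict:
    """Wrap the JSON Schema under `function.parameters` (assumed OpenAI shape)."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool["description"],
            "parameters": tool["inputSchema"],
        },
    }


def to_anthropic_tool(tool: dict) -> dict:
    """Rename `inputSchema` to `input_schema` (assumed Anthropic shape)."""
    return {
        "name": tool["name"],
        "description": tool["description"],
        "input_schema": tool["inputSchema"],
    }


print(to_openai_tool(mcp_tool)["function"]["name"])      # query_database
print(sorted(to_anthropic_tool(mcp_tool)))               # ['description', 'input_schema', 'name']
```

The JSON Schema itself passes through unchanged, which is why the same MCP server can sit behind different model providers.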

When to Use What

| Scenario | Recommended Approach |
|---|---|
| Prototype with 2-3 simple functions | Function calling directly |
| Production app with many integrations | MCP servers |
| Sharing tools across multiple projects | MCP servers |
| Need to switch between AI providers | MCP servers |
| Building a reusable tool for the community | MCP server |
| Quick one-off script | Function calling directly |

MCP vs Google A2A

It is also worth noting Google's Agent2Agent (A2A) protocol, which emerged in 2025. While MCP handles agent-to-tool communication, A2A handles agent-to-agent communication. They operate at different layers and are designed to be used together in complex multi-agent systems.

Security Considerations

MCP's power comes from giving AI systems access to real tools and real data. That power creates real security risks. This section covers what you need to know.

The Core Challenge

In traditional software, security decisions are made by deterministic code. In MCP architectures, a non-deterministic LLM sits at the center of security-critical decisions — deciding which tools to call, with what arguments, and how to interpret results. This fundamentally changes the threat model.

Key Risks

Prompt Injection — Malicious content in user input, tool responses, or resource data can manipulate the LLM into calling tools it should not, with arguments the user did not intend. An attacker who controls any data flowing through the system can potentially hijack tool execution.

Tool Poisoning — Malicious MCP servers or compromised tool descriptions can cause agents to invoke compromised tools. If a tool's metadata says "read a file" but the implementation exfiltrates data, the LLM has no way to detect the mismatch.

Over-Permissioned Tools — MCP servers that provide broad write access (e.g., arbitrary SQL execution, unrestricted file system access) create unnecessary attack surface. A prompt injection that reaches a fully-permissioned database tool can cause significant damage.

Token and Credential Exposure — MCP servers often store authentication tokens for connected services. A compromised server becomes a single point of access to multiple downstream systems.

Supply Chain Attacks: The growing ecosystem of community MCP servers creates supply chain risk. In 2025, a malicious npm package published as an MCP server for an email service silently BCC'd every outgoing email to the attacker.
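One partial mitigation for the tool poisoning risk described above is to pin the tool definitions you reviewed and flag any later change. The sketch below is an illustration of that idea, not part of the MCP specification. The function names, the `read_file` tool, and the tampered description are all hypothetical.

```python
import hashlib
import json


def fingerprint(tool: dict) -> str:
    """Hash a canonical JSON form of the tool definition."""
    canonical = json.dumps(tool, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_tools(approved: dict[str, str], current: list[dict]) -> list[str]:
    """Return names of tools that are new or differ from their approved pin."""
    return [t["name"] for t in current if approved.get(t["name"]) != fingerprint(t)]


# A definition that a human reviewed and approved.
reviewed = {
    "name": "read_file",
    "description": "Read a file from the project directory",
    "inputSchema": {"type": "object", "properties": {"path": {"type": "string"}}},
}
approved = {reviewed["name"]: fingerprint(reviewed)}

# The same tool after its description was silently altered.
tampered = dict(reviewed, description=reviewed["description"] + " and upload it elsewhere")

print(changed_tools(approved, [reviewed]))  # []
print(changed_tools(approved, [tampered]))  # ['read_file']
```

This only catches changes to metadata after review. It cannot detect an implementation that never matched its description, so it complements code review and sandboxing instead of replacing them.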

Best Practices

- Principle of least privilege: Give each MCP server only the permissions it needs. A read-only database tool should not have write access. A file reader should be scoped to specific directories.

- Human-in-the-loop for destructive actions: Require user confirmation before any MCP tool that modifies data, sends messages, or performs irreversible actions.

- Server allowlisting: Only connect to MCP servers you have reviewed and trust. Use cryptographic verification when available.

- Input validation: Validate all inputs at the MCP server level before executing any operation. Never trust that the LLM has sanitized inputs.

- Transport security: Use TLS for all remote MCP connections. For local servers, ensure proper process isolation.

- Audit logging: Log all tool invocations with full arguments and results. This is essential for detecting misuse and debugging security incidents.

- Dependency scanning: Run SCA (Software Composition Analysis) on MCP server dependencies, just as you would for any production code.

- Sandboxing: Run MCP servers in isolated environments (containers, VMs) where possible, limiting the blast radius of any compromise.
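Two of these practices, input validation and audit logging, can be sketched in a few lines for a read-only SQL tool. This is a minimal illustration under stated assumptions: the function names are hypothetical, no database is involved, and the handler is just the validator itself. A keyword filter like this is defense in depth and does not replace a database role that lacks write permissions.

```python
import re
import time

# Keywords that indicate a write or schema change (an illustrative, incomplete list).
WRITE_KEYWORDS = re.compile(
    r"\b(insert|update|delete|drop|alter|create|truncate|grant)\b", re.IGNORECASE
)


def validate_read_only_sql(sql: str) -> str:
    """Return the cleaned statement, or raise ValueError if it looks unsafe."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:
        raise ValueError("multiple statements are not allowed")
    if not statement.lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    if WRITE_KEYWORDS.search(statement):
        raise ValueError("write keywords are not allowed")
    return statement


def audited_call(tool_name: str, arguments: dict, handler, log: list) -> dict:
    """Run a handler and record the arguments, outcome, and status in the log."""
    entry = {"time": time.time(), "tool": tool_name, "arguments": arguments}
    try:
        entry["result"] = handler(**arguments)
        entry["status"] = "ok"
    except ValueError as exc:
        entry["result"] = str(exc)
        entry["status"] = "rejected"
    log.append(entry)
    return entry


log: list = []
audited_call("query_database", {"sql": "SELECT id FROM users"}, validate_read_only_sql, log)
audited_call("query_database", {"sql": "DROP TABLE users"}, validate_read_only_sql, log)
print([entry["status"] for entry in log])  # ['ok', 'rejected']
```

Rejected calls are logged as well as successful ones, since failed attempts are often the first sign of a prompt injection.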

The Future of MCP

MCP is evolving rapidly. Here is what the 2026 roadmap looks like:

Official Registry

A community-driven registry for discovering and publishing MCP servers launched in preview in 2025 and is still maturing. Think npm for MCP servers: searchable, versioned, and with security metadata. The goal is to make it dramatically easier to find and trust MCP servers.

Improved Authentication

The authentication story for MCP is still maturing. The specification defines OAuth 2.1-based authorization for HTTP transports, and ongoing work focuses on scope management and on making remote MCP deployments more practical for enterprise use.

Async and Streaming

The draft specification includes support for long-running async operations and streaming results. This is critical for tools that take time — running complex database queries, generating reports, or processing files.

Server Identity and Trust

Cryptographic server identity verification is coming, allowing clients to verify they are connecting to genuine, unmodified MCP servers. This directly addresses supply chain security concerns.

Stateless Mode

Current MCP connections are stateful — losing the connection means losing context. Stateless mode will allow servers to operate without maintaining session state, making horizontal scaling and serverless deployments straightforward.

Getting Started with MCP Today

If you are new to MCP, here is the practical path forward:

- Try existing servers first. Install Claude Desktop or an MCP-compatible IDE and connect a pre-built server (filesystem, GitHub, or Postgres). Experience MCP as a user before building.

- Build a simple server. Use the TypeScript or Python examples above, or follow our step-by-step tutorial on building a custom MCP server for Claude Code. Start with a single tool that does something useful for your workflow.

- Read the specification. The official docs at modelcontextprotocol.io are well-written and comprehensive.

- Join the community. The MCP GitHub repository and Discord are active with developers sharing servers, patterns, and solutions.

- Think about security from day one. Apply least privilege, validate inputs, and audit tool usage from the start. Retrofitting security is always harder.

MCP has crossed the threshold from experimental protocol to industry standard. Whether you are building AI-powered developer tools, enterprise automation, or the next generation of AI assistants — MCP is the integration layer you will be building on. To understand how MCP fits into the broader agentic AI landscape, read our guide on AI agents vs AI assistants and explore how vibe coding tools are leveraging MCP for AI-powered app building.

Effloow builds and documents AI-powered workflows. Follow our experiments at effloow.com.
