OpenClaw: Self-Hosted AI Gateway for WhatsApp, Telegram & Discord

Why OpenClaw Has 250,000 GitHub Stars in Four Months

In late January 2026, a project called OpenClaw crossed 100,000 GitHub stars in under two weeks. By March 3, 2026, it had surpassed React's decade-long record of 243,000 stars, claiming the title of the fastest organic growth in GitHub's history. Today it sits north of 250,000 stars and shows no signs of slowing.

That number is remarkable because OpenClaw isn't a new programming language, a framework powering billions of apps, or a cloud platform. It's a personal AI gateway — a self-hosted bridge between your messaging apps and whatever LLM you choose to run. One Node.js process, running locally or on a $4/month VPS, that makes AI available anywhere you already communicate.

The velocity of OpenClaw's growth tells you something important about where developers' priorities have shifted in 2026. After years of AI tools that require subscriptions, demand cloud accounts, and quietly train on your data, a tool that gives you complete local control struck a nerve.

This guide covers everything you need to set OpenClaw up, connect your first channel, install skills, and understand its architecture — including the important context around Anthropic's April 2026 billing policy change that every potential user should know.

What OpenClaw Actually Is

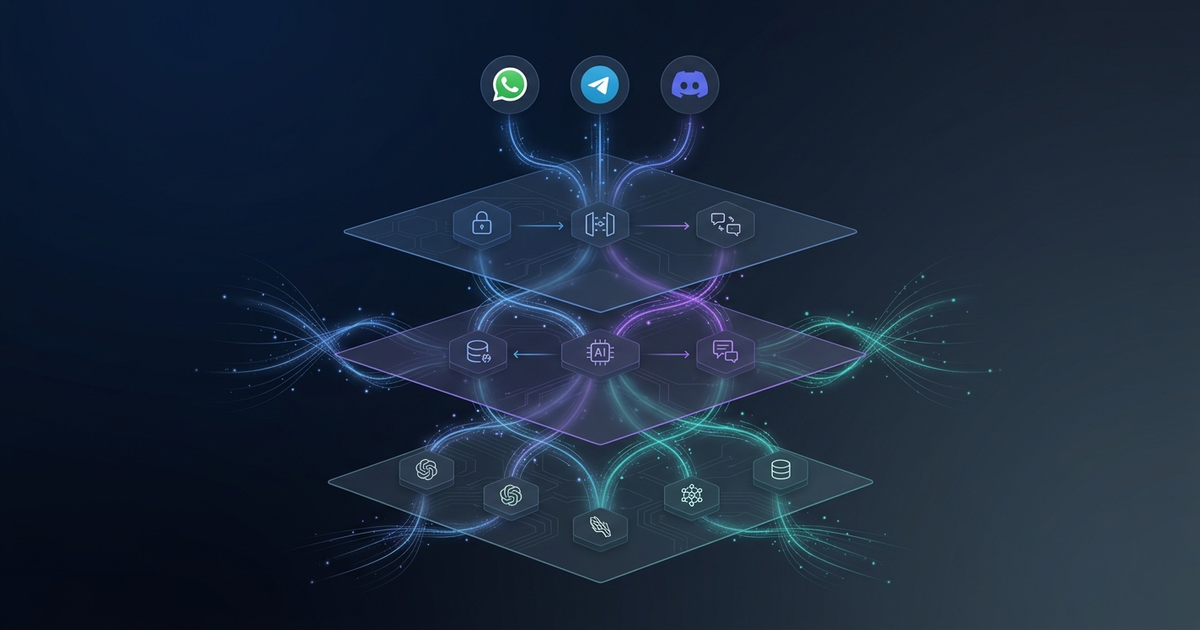

OpenClaw is a single long-running Node.js process — the Gateway — that acts as a control plane for AI sessions across messaging platforms. You run it on your own machine, a Raspberry Pi, a VPS, or dedicated hardware (the ClawBox ships with OpenClaw pre-installed). From that single process, you can message your AI assistant from WhatsApp, Telegram, Slack, Discord, Signal, iMessage, Matrix, Microsoft Teams, Google Chat, Zalo, and more — all routed through the same session context.

The design philosophy is gateway-first: the messaging infrastructure is the primary product, and the AI agent runs inside it. This makes OpenClaw fundamentally different from Hermes Agent, which is agent-first (the agent is the primary product, and messaging is a secondary concern). Neither approach is strictly better — they optimize for different use cases.

Core Concepts

Gateway — The long-running process. Manages sessions, channel routing, tool dispatch, and the heartbeat scheduler. Operates as a WebSocket server on port 18789 by default.

Workspace — A directory that defines the agent's identity and capabilities. Contains AGENTS.md (behavior instructions), SOUL.md (personality), and optionally TOOLS.md (tool declarations).

Skills — Markdown files with YAML frontmatter that teach the agent specialized behaviors. Skills live in ~/.openclaw/skills/ (global) or <project>/skills/ (workspace-scoped). At runtime, only skills relevant to the current turn are injected into the prompt — preventing context bloat.

Channels — Connectors to messaging platforms. Each channel is a plugin that handles protocol-specific authentication and message routing.

ClawHub — The community marketplace. As of April 2026, it hosts over 5,700 community-contributed skills covering email management, browser automation, code review, calendar management, and hundreds of domain-specific tasks.

Supported Platforms

OpenClaw supports 25+ messaging platforms out of the box, including:

| Platform | Channel Type | Notes |
|---|---|---|
| WhatsApp | Built-in | Requires WhatsApp Web bridge or Business API |
| Telegram | Built-in | Bot token setup, richest feature support |
| Discord | Built-in | Bot or DM mode, server slash commands |
| Signal | Built-in | Via signal-cli bridge |
| Slack | Built-in | Workspace app or DM |
| iMessage | Built-in (macOS) | macOS only via BlueBubbles bridge |
| Matrix | Built-in | Works with Element and other Matrix clients |
| Microsoft Teams | Built-in | Enterprise bot registration required |
| Google Chat | Built-in | Google Workspace account required |
| Voice (macOS/iOS/Android) | Built-in | Wake word activation supported |
| CLI / Web Canvas | Built-in | Browser-based Live Canvas for visual output |

Installation: Three Paths

Path 1: One-Line Install (Recommended for Linux/macOS)

curl -fsSL https://get.openclaw.ai | bash

This installs Node.js 24 (if not present), downloads the OpenClaw binary, and drops you into the interactive onboarding wizard. The wizard walks you through gateway configuration, LLM provider selection, and your first channel connection.

Prerequisites: Node 24 (recommended) or Node 22 LTS (22.14+), an API key from your chosen LLM provider, 5 minutes.

Path 2: Source Install

git clone https://github.com/openclaw/openclaw.git
cd openclaw
pnpm install
pnpm openclaw setup

Source installs are useful when you need to audit the code (strongly recommended before connecting to work accounts — see the security section below), run a patched fork, or contribute upstream.

Path 3: ClawBox Hardware

ClawBox ships with OpenClaw pre-installed. Plug in power and ethernet, open the OpenClaw mobile app, scan the QR code on the device, and follow the wizard. No terminal required. Pricing for ClawBox hardware starts around $149 for the base model.

Configuration Walkthrough

After installation, your config lives at ~/.openclaw/config.yaml. A minimal working configuration looks like this:

gateway:
  mode: local
  port: 18789
  bind: loopback # IMPORTANT: loopback for local, 0.0.0.0 for VPS behind reverse proxy
  auth:
    mode: token # Use token auth when binding to a non-loopback address
agents:
  defaults:
    workspace: ~/openclaw-workspace
    model: anthropic/claude-sonnet-4-6 # or openai/gpt-4o, google/gemini-3-pro, etc.
    provider_key: ${ANTHROPIC_API_KEY}

Security note: If you bind to 0.0.0.0 — required when running on a VPS — enable token authentication and place OpenClaw behind a reverse proxy (nginx or Caddy) with TLS. The Canvas Host component defaults to binding 0.0.0.0, which means any device on your local network can reach the web interface without authentication. Always set auth.mode: token in production.
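To make the token-auth advice concrete, here is a minimal sketch of generating a high-entropy token and comparing it safely. How OpenClaw itself stores or validates its gateway token is not described in this guide, so the helper names `generate_gateway_token` and `token_matches` are hypothetical; only the Python standard-library calls are real.

```python
import hmac
import secrets


def generate_gateway_token(num_bytes: int = 32) -> str:
    """Return a URL-safe random token (hypothetical helper, not an OpenClaw API)."""
    return secrets.token_urlsafe(num_bytes)


def token_matches(presented: str, expected: str) -> bool:
    """Compare two tokens in constant time to avoid leaking information via timing."""
    return hmac.compare_digest(presented.encode("utf-8"), expected.encode("utf-8"))


if __name__ == "__main__":
    token = generate_gateway_token()
    print("Keep this out of version control:", token)
```

Whatever mechanism you use, the point is the same: the token should be long, random, and supplied through an environment variable or secret store rather than committed alongside the config file.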

Setting Up Your Workspace

mkdir ~/openclaw-workspace && cd ~/openclaw-workspace

# AGENTS.md: behavior instructions (equivalent to a system prompt)
cat > AGENTS.md << 'EOF'
You are my personal technical assistant. You have access to my local filesystem,
can run shell commands, and can search the web. Always confirm before destructive actions.
Respond concisely unless I ask for detail.
EOF

# Start the gateway
openclaw start

Connecting Telegram (Quickest Channel)

Telegram is the fastest channel to configure and has the richest feature support in OpenClaw:

- Open Telegram, search for @BotFather, and run /newbot

- Copy the bot token (looks like 7812345678:ABC-...)

- In your OpenClaw config, add:

channels:
  telegram:
    enabled: true
    token: ${TELEGRAM_BOT_TOKEN}
    allowed_users:
      - your_telegram_user_id # Find this via @userinfobot

- Restart the gateway: openclaw restart

- Send your bot a message. OpenClaw will respond through the configured model.
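As an alternative to @userinfobot, your numeric user ID also appears in the Telegram Bot API's getUpdates response once you have sent your bot a message. The sketch below only parses such a payload; the sample data is fabricated and the helper name `sender_ids` is an assumption for illustration.

```python
import json


def sender_ids(get_updates_json: str) -> dict[int, str]:
    """Map each sender's numeric ID to a display name from a getUpdates payload."""
    payload = json.loads(get_updates_json)
    if not payload.get("ok"):
        raise ValueError("Telegram API reported an error")
    found: dict[int, str] = {}
    for update in payload.get("result", []):
        sender = update.get("message", {}).get("from")
        if sender:
            found[sender["id"]] = sender.get("username") or sender.get("first_name", "")
    return found


# Fabricated sample shaped like a Bot API getUpdates response.
SAMPLE = json.dumps({
    "ok": True,
    "result": [
        {
            "update_id": 1,
            "message": {
                "message_id": 10,
                "from": {"id": 111222333, "is_bot": False, "first_name": "Ada"},
                "chat": {"id": 111222333, "type": "private"},
                "text": "hello",
            },
        },
    ],
})
```

The real payload comes from `https://api.telegram.org/bot<token>/getUpdates`. It is probably easiest to fetch it before the gateway is running, since another process polling the same bot may consume the updates first.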

Skills: The Composability Layer

Skills are what make OpenClaw extensible beyond a basic chat interface. A skill is a directory containing at minimum a SKILL.md file:

---
name: web-search
version: 1.2.0
tools:
  - search_web
  - fetch_url
triggers:
  - keywords: [search, find, look up, what is]
---

# Web Search Skill

When the user asks you to search for something, use the `search_web` tool with
their query. Summarize results in 3-5 bullet points. Include the source URL
for each fact you cite.

## Tool: search_web

Params: query (string), num_results (int, default 5)
Returns: Array of { title, url, snippet }

OpenClaw's runtime selectively injects skills based on keyword matching and semantic relevance — it does not inject every installed skill into every prompt. This keeps context lean and model performance high.
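The keyword half of that selection can be pictured with a few lines of Python. This is only a sketch of the idea, not OpenClaw's actual matcher: the `Skill` class and `select_skills` function are hypothetical, the trigger keywords for calendar-manager and git-reviewer are invented, and the semantic-relevance step is not modeled at all.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    keywords: list[str] = field(default_factory=list)


def select_skills(message: str, skills: list[Skill]) -> list[str]:
    """Return names of skills with at least one trigger keyword in the message."""
    text = message.lower()
    return [s.name for s in skills if any(k.lower() in text for k in s.keywords)]


INSTALLED = [
    Skill("web-search", ["search", "find", "look up", "what is"]),
    Skill("calendar-manager", ["meeting", "schedule", "calendar"]),  # invented keywords
    Skill("git-reviewer", ["pull request", "review", "diff"]),       # invented keywords
]

if __name__ == "__main__":
    print(select_skills("Can you look up the weather?", INSTALLED))
```

Plain substring matching is deliberately naive: "find" would also match "finding". A real matcher would need word boundaries or a relevance score, which is presumably where the semantic step comes in.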

Installing from ClawHub

openclaw skill install calendar-manager # Interactive calendar management
openclaw skill install git-reviewer # PR review from CLI
openclaw skill install web-search # Web browsing via Brave/Serper
openclaw skill install file-manager # Local filesystem operations
openclaw skill list # Show all installed skills

ClawHub's 5,700+ community skills cover a wide range of use cases. Before installing any community skill, review its SKILL.md for tool declarations — a skill that requests exec_shell and read_file has significant access to your system.

LLM Provider Options

OpenClaw routes to any OpenAI-compatible API endpoint. Provider configuration goes in your config:

agents:
  defaults:
    model: anthropic/claude-sonnet-4-6
    provider_key: ${ANTHROPIC_API_KEY}
    # Or switch to a local model:
    # model: ollama/llama4-scout
    # provider_url: http://localhost:11434/v1

Supported out of the box: Anthropic Claude, OpenAI GPT series, Google Gemini, Mistral, Meta Llama (via Together/Groq/Fireworks), and any local model via Ollama. For zero-cost inference, connecting to a local Ollama instance eliminates per-token API costs entirely, though output quality is generally lower than that of frontier models.
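Before pointing OpenClaw at a local endpoint, it can help to confirm that the endpoint answers OpenAI-style requests at all. The sketch below builds a chat completions request against the Ollama URL from the config above. The helper names are hypothetical, and the model name `llama4-scout` is simply copied from the config comment; it has to match whatever name your local Ollama instance actually lists.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-compatible chat completions endpoint."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's text (needs a running server)."""
    with urllib.request.urlopen(request, timeout=60) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("http://localhost:11434/v1", "llama4-scout", "Say hi.")
    print(send(req))
```

If this round trip fails, the problem is in the local model server rather than in OpenClaw, which narrows down debugging considerably.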

The Anthropic Billing Change You Need to Know

In April 2026, Anthropic made a significant policy change that directly affects OpenClaw users who were routing Claude requests through their Claude Pro/Max subscription via OAuth.

What happened: Prior to April 4, 2026, some OpenClaw users authenticated using their Claude.ai subscription OAuth token instead of an API key. This let them use Claude through OpenClaw without paying per-token API costs. Anthropic ended this on April 4, citing that autonomous agent workloads placed "outsized strain" on infrastructure designed for interactive use.

What this means for you:

- If you use Claude with OpenClaw, you need a paid Anthropic API key (ANTHROPIC_API_KEY)

- OAuth-based subscription passthrough no longer works for third-party tools

- Anthropic's pricing for Claude Sonnet 4.6 is $3/$15 per million input/output tokens

What this doesn't mean:

- You can still use OpenClaw freely — the software itself is Apache 2.0

- You can still connect Claude via API key (most users were already doing this)

- Other providers (OpenAI, Gemini, Ollama, Mistral) are completely unaffected

For most power users, the switch to API key was already the recommended approach. The change primarily affected users who were deliberately avoiding per-token costs via subscription OAuth — a use pattern that was always a gray area.

Security Considerations

OpenClaw's rapid growth brought significant security scrutiny. The community has identified two categories of concern worth understanding before deploying.

Third-Party Skills Risk

Community skills request tool access as part of their SKILL.md declaration. A skill that requires exec_shell, write_file, or fetch_url has real access to your system. The rule: if you haven't read the source code, don't install it. This is especially true for skills that request shell execution.

Mitigations:

- Use workspace-scoped skills instead of global installs for untrusted sources

- Review every tools: declaration in SKILL.md before installing

- Run OpenClaw in a container or VM for high-security environments
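The review step can be partly automated. The following is a rough sketch that pulls the tools: list out of a SKILL.md frontmatter and flags the tool names this guide calls out as sensitive. It handles only the simple block-list layout shown earlier, the function names are hypothetical, and it is no substitute for reading the skill's instructions themselves.

```python
RISKY_TOOLS = {"exec_shell", "write_file", "read_file", "fetch_url"}


def declared_tools(skill_md: str) -> list[str]:
    """Extract entries of the `tools:` list from a SKILL.md's YAML frontmatter.

    Only the simple block-list layout is handled; use a real YAML parser
    for anything more complex.
    """
    lines = [line.rstrip() for line in skill_md.splitlines() if line.strip()]
    if not lines or lines[0] != "---":
        return []
    tools, in_tools = [], False
    for line in lines[1:]:
        if line == "---":  # end of frontmatter
            break
        stripped = line.strip()
        if stripped == "tools:":
            in_tools = True
        elif in_tools and stripped.startswith("- "):
            tools.append(stripped[2:].strip())
        else:
            in_tools = False  # any other key ends the tools list
    return tools


def risky_tools(skill_md: str) -> list[str]:
    """Return declared tools that grant shell, filesystem, or network access."""
    return [t for t in declared_tools(skill_md) if t in RISKY_TOOLS]


EXAMPLE = """---
name: web-search
version: 1.2.0
tools:
  - search_web
  - fetch_url
triggers:
  - keywords: [search, find, look up, what is]
---
# Web Search Skill
"""
```

Running `risky_tools` over every SKILL.md under a skills directory gives a quick shortlist of the skills that deserve a closer manual read.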

Network Exposure

OpenClaw's Canvas Host (the web interface) defaults to binding 0.0.0.0. On a home network, this means any device on your network can access the interface without authentication.

# Explicitly restrict Canvas binding
canvas:
  bind: "127.0.0.1" # Loopback only
  port: 18790

If you need remote access, use a VPN or SSH tunnel rather than exposing Canvas to the public internet.
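One way to verify the binding is to probe the Canvas port from both the loopback address and the machine's LAN address. The sketch below uses only the standard library; the helper names are hypothetical, and port 18790 is the value from the snippet above.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def lan_address() -> str:
    """Best-effort guess at this machine's LAN IP; falls back to loopback."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as probe:
        try:
            # Connecting a UDP socket sends nothing; it only selects a local route.
            probe.connect(("192.0.2.1", 9))
            return probe.getsockname()[0]
        except OSError:
            return "127.0.0.1"


if __name__ == "__main__":
    print("loopback:", is_reachable("127.0.0.1", 18790))
    print("LAN     :", is_reachable(lan_address(), 18790))
```

With Canvas restricted to loopback, the first check should succeed and the second should fail. If both succeed, the interface is still exposed to the local network.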

OpenClaw vs. Hermes Agent: When to Choose Which

Where OpenClaw is stronger:

- Broader multi-platform messaging support (25+ channels vs. Hermes' 6)

- Voice wake word + Live Canvas browser interface

- Simpler initial setup, with an onboarding wizard that handles most configuration

- 5,700+ community skills on ClawHub marketplace

- Provider-agnostic: Claude, GPT, Gemini, or local Ollama models

- Heartbeat scheduler for autonomous background tasks

Where OpenClaw is weaker:

- Skills are static files, with no self-improvement or autonomous skill creation

- Shallower LLM provider integration than Hermes (Hermes supports 200+ via OpenRouter)

- Community skill quality is inconsistent and requires manual review

- Memory is session-scoped by default; long-term memory requires additional setup

- Node.js runtime; higher baseline memory usage than Hermes (Rust-based)

The clearest decision rule: if you need a persistent assistant you can message from multiple platforms through a unified interface, OpenClaw is the more direct solution. If you need an agent that learns and improves from session to session with minimal manual skill maintenance, Hermes Agent has the clearer roadmap for that use case.

Realistic Cost Breakdown

Running OpenClaw involves two cost categories that are easy to conflate:

| Component | Option | Monthly Cost |
|---|---|---|
| Infrastructure | Local machine (already running) | $0 |
| Infrastructure | Budget VPS (Hetzner, Contabo) | $4–7 |
| Infrastructure | Managed OpenClaw hosting (Hostinger) | $7–24 |
| Infrastructure | ClawBox hardware (amortized) | ~$12 (over 12 months) |
| LLM API | Claude Sonnet 4.6 (light use, ~2M tokens/mo) | ~$6 |
| LLM API | Claude Sonnet 4.6 (active use, ~20M tokens/mo) | ~$60 |
| LLM API | Local Ollama (Llama 4 Scout or similar) | $0 |
| LLM API | OpenAI GPT-4o (moderate use) | ~$20–40 |

For a developer using OpenClaw as a daily assistant across Telegram and Slack, with Claude Sonnet 4.6 and a budget VPS, expect $15–30/month total. If you run local Ollama models, infrastructure costs alone apply.
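The Claude figures in the table line up with pricing every token at the $3 input rate (2M × $3 = $6). Output tokens cost five times as much, so the real bill depends on the input/output mix. The small calculator below makes that explicit; the function name and the 20% output share in the test are illustrative assumptions, not measured usage.

```python
# USD per million tokens for Claude Sonnet 4.6, as quoted earlier in this guide.
INPUT_PRICE = 3.00
OUTPUT_PRICE = 15.00


def monthly_api_cost(total_tokens: int, output_share: float = 0.0) -> float:
    """Estimate monthly API spend for a token volume and output fraction (0.0 to 1.0)."""
    if not 0.0 <= output_share <= 1.0:
        raise ValueError("output_share must be between 0 and 1")
    output_tokens = total_tokens * output_share
    input_tokens = total_tokens - output_tokens
    cost = (input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE) / 1_000_000
    return round(cost, 2)


if __name__ == "__main__":
    for share in (0.0, 0.1, 0.2):
        print(f"2M tokens, {share:.0%} output: ${monthly_api_cost(2_000_000, share):.2f}")
```

At a 20% output share, the same 2M tokens come to $10.80 rather than $6, so treat the table's API rows as a lower bound for chat-heavy use.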

FAQ

Q: Does OpenClaw work on Windows?

The gateway runs on Windows via WSL2. Native Windows support is in progress but not yet recommended for production use. macOS and Linux (Ubuntu/Debian) are the primary supported targets.

Q: Can I run multiple AI models simultaneously?

Yes. OpenClaw supports per-workspace model configuration, so you can have one workspace using Claude Sonnet 4.6 (for complex tasks) and another using a local Ollama model (for lightweight queries). Channel routing can direct specific conversations to specific workspaces.

Q: How does OpenClaw handle conversation memory?

By default, OpenClaw maintains session-scoped memory — context within a conversation thread is preserved, but doesn't carry across sessions without configuration. Long-term memory requires either a Vector Memory skill (available on ClawHub) or manual memory file management in your workspace directory.

Q: Is OpenClaw affected by Anthropic's Terms of Service?

OpenClaw itself is Apache 2.0 licensed and independent of Anthropic. Using Claude models through the Anthropic API (with an API key) is fully compliant with Anthropic's terms. The April 2026 policy change only affected users routing requests through Claude.ai OAuth tokens — API key usage was and remains supported.

Q: What happens when my VPS goes offline?

Sessions are queued in the Gateway's local SQLite database. When the gateway comes back online, queued messages are processed in order. For production deployments, consider using a process manager (PM2 or systemd) with automatic restart.
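The queue-and-replay behaviour described above can be pictured as a small first-in, first-out table in SQLite. The sketch below is a generic illustration of that pattern, not OpenClaw's actual schema: the `inbox` table, its columns, and the function names are all assumptions.

```python
import sqlite3


def open_queue(path: str = ":memory:") -> sqlite3.Connection:
    """Create the queue table if needed (hypothetical schema, not OpenClaw's)."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS inbox ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "channel TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return conn


def enqueue(conn: sqlite3.Connection, channel: str, body: str) -> None:
    """Append one message; the context manager commits or rolls back."""
    with conn:
        conn.execute("INSERT INTO inbox (channel, body) VALUES (?, ?)", (channel, body))


def drain(conn: sqlite3.Connection) -> list[tuple[str, str]]:
    """Return queued messages oldest-first and delete them in one transaction."""
    with conn:
        rows = conn.execute("SELECT id, channel, body FROM inbox ORDER BY id").fetchall()
        conn.executemany("DELETE FROM inbox WHERE id = ?", [(r[0],) for r in rows])
    return [(channel, body) for _, channel, body in rows]
```

Ordering by an auto-incrementing key is what preserves arrival order across a restart, and keeping the queue on disk is why a process manager with automatic restart is enough to recover without losing messages.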

Key Takeaways

- OpenClaw is infrastructure, not just an app. The Gateway process is a persistent service that manages AI sessions across every platform you communicate on. Think of it as a personal AI router.

- The Anthropic billing change matters, but doesn't break anything. You need an API key for Claude, the same as for any other OpenClaw LLM provider. OpenClaw's value proposition is platform routing and skill composability, not subscription arbitrage.

- Review skills for security before installing them. The 5,700+ ClawHub skills vary wildly in quality and in the permissions they request. A five-minute source review of each new skill is not optional for security-conscious deployments.

- OpenClaw's strength is breadth; Hermes Agent's strength is depth. If you want one AI assistant accessible from every messaging app you use, OpenClaw wins on coverage. If you want an agent that refines itself over time, Hermes Agent's self-improvement loop is the differentiator.

- Local Ollama models eliminate API costs entirely. For privacy-sensitive workflows or budget constraints, running Llama 4 Scout or a similar model locally via Ollama makes OpenClaw essentially free to operate after infrastructure costs.

OpenClaw is the most capable self-hosted personal AI gateway available in 2026 if your goal is a single AI assistant that works wherever you already communicate. Its 250,000-star growth reflects a genuine developer need for local-first AI control, not hype. Setup is straightforward, the skill ecosystem is maturing rapidly, and the architecture is clean enough to extend. Just review community skills before installing them, use API keys rather than OAuth tokens for Claude, and lock down your Canvas interface on any shared network.
