Fine-Tune LLMs with LoRA and QLoRA: 2026 Guide

Learn to fine-tune LLMs with LoRA and QLoRA in 2026. VRAM requirements, dataset prep, Unsloth/Axolotl setup, hyperparameters, and evaluation.

Two years ago, fine-tuning a large language model required a rack of A100s, a machine learning team, and a five-figure cloud bill. In 2026, a single RTX 4070 Ti is enough to specialize a 7B model on your domain data — in an afternoon.

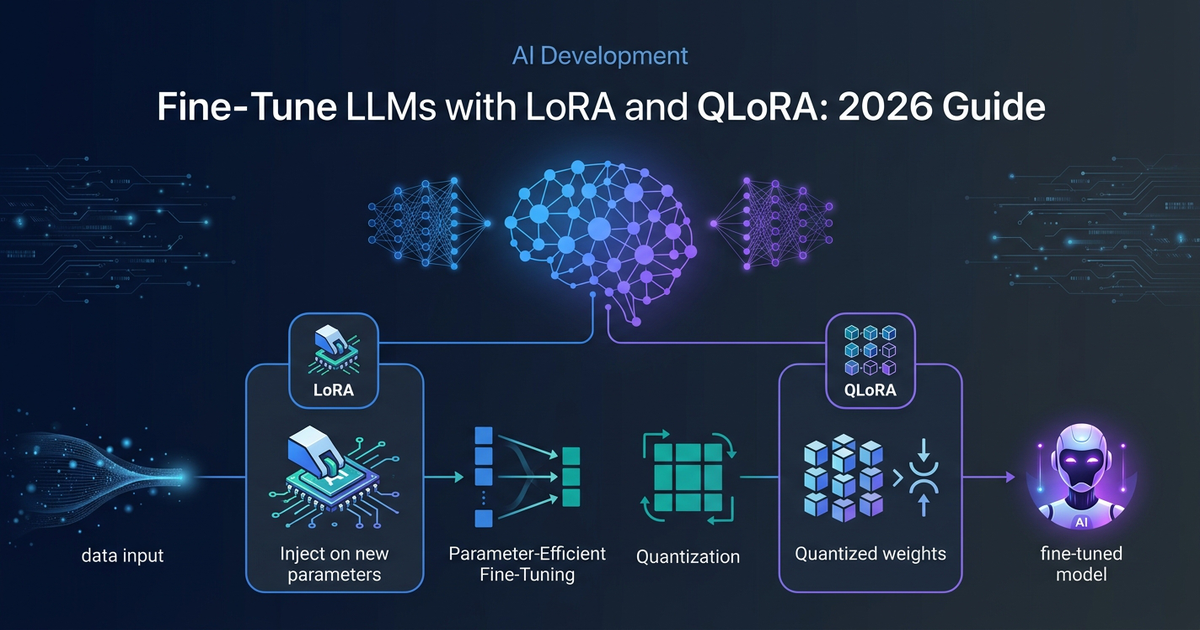

That shift happened because of two techniques: LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA). Together they turn the problem of "update 7 billion parameters" into "train well under 1% of that many, and store the frozen rest in 4-bit precision." The math is elegant, the results come surprisingly close to full fine-tuning, and the toolchain in 2026 has matured to the point where a YAML config file is often all you need.

This guide explains how both techniques work, what hardware you actually need, how to prepare your dataset, which tool to reach for, and how to know if your fine-tune actually worked.

Why Fine-Tune at All?

Prompt engineering and RAG solve many problems — retrieval handles factual grounding, and system prompts can shape behavior. But they hit walls:

- Style consistency: You want every output to sound like your brand's voice, not a generic assistant.

- Format adherence: Legal documents, structured JSON, domain-specific schemas — prompts alone are brittle.

- Latency and cost: A fine-tuned 7B model often matches a prompted 70B model on narrow tasks at a fraction of the inference cost.

- Offline capability: A fine-tuned local model works without API calls, which matters for compliance and data privacy.

When your task has clear input/output structure and you can produce 500–5,000 high-quality examples, fine-tuning is the right tool.

How LoRA Works

Full fine-tuning updates all of a model's weights (billions of floating-point numbers), which requires storing gradients and optimizer states for every single parameter. At 16-bit precision, a 7B model needs roughly 14 GB of VRAM just to hold the weights, and mixed-precision training with the Adam optimizer needs about 16 bytes per parameter for weights, gradients, and optimizer state: roughly 8× that, or ~112 GB, before counting activations.
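The figures above follow from simple per-parameter arithmetic. The sketch below assumes the common mixed-precision Adam accounting of about 16 bytes per parameter (2-byte weights, 2-byte gradients, a 4-byte fp32 master copy, and two 4-byte Adam moments) and deliberately ignores activations, CUDA context, and allocator overhead, so treat its output as a lower bound rather than a measurement.

```python
GB = 1e9  # decimal gigabytes, matching the round numbers used in this guide


def weight_memory_gb(n_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights."""
    return n_params * bytes_per_param / GB


def full_finetune_memory_gb(n_params: float) -> float:
    """Assumed mixed-precision Adam footprint, excluding activations."""
    bytes_per_param = (
        2    # bf16/fp16 weights
        + 2  # bf16/fp16 gradients
        + 4  # fp32 master copy of the weights
        + 4  # Adam first moment (fp32)
        + 4  # Adam second moment (fp32)
    )
    return n_params * bytes_per_param / GB


for n, label in [(7e9, "7B"), (70e9, "70B")]:
    print(f"{label}: weights {weight_memory_gb(n, 2):.0f} GB, "
          f"full fine-tune ~{full_finetune_memory_gb(n):.0f} GB")
```

For 7B this gives about 14 GB of weights and about 112 GB for full fine-tuning, the same ~8× multiplier that shows up in the full fine-tuning column of the hardware table.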

LoRA sidesteps this by decomposing weight updates into low-rank matrices. Instead of updating the full weight matrix W (which might be 4096×4096 = 16.7 million parameters), LoRA learns two small matrices A and B where:

ΔW = B × A

Where A has shape (r × d_in) and B has shape (d_out × r), with r being the rank — typically 8 to 64. The original weights W₀ are frozen. During inference, the adapted weight is simply:

W = W₀ + (α/r) × B × A
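Here is that formula as a dependency-free Python sketch on nested lists (no PyTorch), using made-up toy matrices. It also shows why LoRA starts safely: B is initialized to zero, so the merged weight initially equals W₀ exactly. This mirrors the math only; libraries such as PEFT apply the adapter to activations during training rather than materializing ΔW.

```python
def matmul(X, Y):
    """Multiply an (n x k) matrix by a (k x m) matrix stored as nested lists."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def lora_merged_weight(W0, A, B, alpha, r):
    """Return W0 + (alpha / r) * B @ A.

    W0: d_out x d_in (frozen), A: r x d_in, B: d_out x r.
    """
    BA = matmul(B, A)  # d_out x d_in, same shape as W0
    scale = alpha / r
    return [[W0[i][j] + scale * BA[i][j] for j in range(len(W0[0]))]
            for i in range(len(W0))]


# Toy shapes: d_out = 3, d_in = 4, rank r = 1.
W0 = [[0.0] * 4 for _ in range(3)]
A = [[1.0, 2.0, 3.0, 4.0]]   # r x d_in
B = [[1.0], [0.0], [2.0]]    # d_out x r
print(lora_merged_weight(W0, A, B, alpha=2, r=1))

# With B initialized to zero (as at the start of LoRA training), nothing changes.
B_init = [[0.0], [0.0], [0.0]]
print(lora_merged_weight(W0, A, B_init, alpha=2, r=1) == W0)  # True
```

The adapter stores only A and B: here 1×4 + 3×1 = 7 numbers versus 12 for W₀, and for a 4096×4096 matrix at r=16 it is 131,072 numbers versus 16.7 million.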

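The scaling factor α/r controls how strongly the adapter influences the output. At rank 16 with a 7B model, adapting all seven linear projections in every layer means training just under 40 million parameters, less than 0.6% of the total; adapting only the query and value projections brings that to roughly 0.1%. LoRA thereby cuts trainable parameters by two to three orders of magnitude (the original LoRA paper reports up to 10,000× for GPT-3 175B) while recovering 90–95% of full fine-tuning quality on most tasks.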
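The parameter count is easy to reproduce. This sketch assumes Llama 2 7B's published shapes (hidden size 4096, MLP size 11008, 32 layers, full-size key/value projections, roughly 6.74B parameters in total). Models that use grouped-query attention, such as Llama 3.1 8B, have smaller k_proj and v_proj matrices and therefore slightly different totals.

```python
# Assumed Llama 2 7B shapes: no grouped-query attention, so k/v are full size.
HIDDEN, MLP, LAYERS = 4096, 11008, 32
BASE_PARAMS = 6.74e9  # approximate total parameter count

# (d_in, d_out) of each projection in one decoder layer
SHAPES = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, HIDDEN),
    "v_proj": (HIDDEN, HIDDEN),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, MLP),
    "up_proj": (HIDDEN, MLP),
    "down_proj": (MLP, HIDDEN),
}


def lora_params(r, modules):
    """Each adapted matrix adds A (r x d_in) plus B (d_out x r)."""
    per_layer = sum(r * (SHAPES[m][0] + SHAPES[m][1]) for m in modules)
    return per_layer * LAYERS


for label, modules in [("q,v only", ["q_proj", "v_proj"]), ("all linear", list(SHAPES))]:
    n = lora_params(16, modules)
    print(f"r=16, {label}: {n:,} params ({100 * n / BASE_PARAMS:.2f}% of base)")
```

Running it gives 8,388,608 parameters (about 0.12%) for q_proj/v_proj only and 39,976,960 (about 0.59%) for all seven projections. Because the count is linear in r, doubling the rank doubles both numbers.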

QLoRA: Taking It Further with Quantization

QLoRA extends LoRA by quantizing the frozen base model weights to 4-bit precision using a data type called NF4 (4-bit NormalFloat). NF4 is information-theoretically optimal for normally distributed weights, and the weights of pretrained networks are approximately normally distributed.
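To see what 4-bit storage means mechanically, here is a simplified, pure-Python illustration of NormalFloat-style block quantization. Assumptions: the 16 levels sit at equal-probability quantiles of a standard normal, rescaled to [-1, 1], and each block keeps one absmax scale. The real NF4 codebook in bitsandbytes is built differently (it is asymmetric and contains an exact zero), and QLoRA uses blocks of 64 weights, so this shows the idea rather than the library's implementation.

```python
from statistics import NormalDist


def normal_float_levels(bits=4):
    """Simplified NF-style codebook: 2**bits levels at equal-probability
    quantiles of N(0, 1), rescaled so the outermost level is +/-1."""
    n = 2 ** bits
    dist = NormalDist()
    # Midpoints of n equal-probability bins avoid the infinite 0 and 1 quantiles.
    quantiles = [dist.inv_cdf((i + 0.5) / n) for i in range(n)]
    scale = max(abs(q) for q in quantiles)
    return [q / scale for q in quantiles]


def quantize_block(block, levels):
    """Absmax-scale a block into [-1, 1], then keep the index of the nearest level."""
    absmax = max(abs(x) for x in block) or 1.0
    codes = [min(range(len(levels)), key=lambda i: abs(x / absmax - levels[i]))
             for x in block]
    return codes, absmax  # 4-bit codes plus one scale per block


def dequantize_block(codes, absmax, levels):
    return [levels[c] * absmax for c in codes]


levels = normal_float_levels()
weights = [0.12, -0.03, 0.41, -0.27, 0.0, 0.08, -0.51, 0.19]
codes, absmax = quantize_block(weights, levels)
restored = dequantize_block(codes, absmax, levels)
print(codes)
print([round(w, 3) for w in restored])
```

Levels are densest near zero, where most weights live, which is the intuition behind spending the 16 codes according to a normal distribution rather than spacing them evenly.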

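The practical result: a 7B model whose weights need ~14 GB at 16-bit precision needs only about 4 GB in 4-bit, so a QLoRA run fits in roughly 5–8 GB depending on sequence length. A 70B model drops from ~140 GB of weights to around 46 GB for QLoRA training, fitting on a single A100 80 GB.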
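Weight storage accounts for most of that drop. The sketch below assumes 16 bits per weight for the baseline and, for 4-bit storage, about 0.127 extra bits per parameter for quantization constants after double quantization, the overhead reported in the QLoRA paper. The rest of the training budget (adapters, optimizer state, activations) is not modeled.

```python
GB = 1e9  # decimal gigabytes


def weight_gb(n_params, bits_per_param):
    """Memory to store n_params weights at the given average bit width."""
    return n_params * bits_per_param / 8 / GB


# 4-bit codes plus quantization constants. Assumption: ~0.127 extra bits per
# parameter with double quantization; without it, one fp32 absmax per
# 64-weight block would cost 32 / 64 = 0.5 extra bits.
NF4_DQ_BITS = 4 + 0.127

for n, label in [(7e9, "7B"), (70e9, "70B")]:
    print(f"{label}: 16-bit {weight_gb(n, 16):.1f} GB, "
          f"NF4 + double quant {weight_gb(n, NF4_DQ_BITS):.1f} GB")
```

For 70B this comes to about 36 GB of frozen weights, which leaves roughly 10 GB of the ~46 GB figure for the adapters, their optimizer state, and activations.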

The trade-off is quality: QLoRA typically achieves 80–90% of full fine-tuning performance, compared to 90–95% for standard LoRA. For most production use cases, that gap is acceptable. For highly specialized tasks requiring maximum quality, LoRA in 16-bit is worth the extra VRAM.

Hardware Requirements in 2026

Approximate training VRAM (practical minimums) for common model sizes:

| Model Size | Full FT (16-bit) | LoRA (16-bit) | QLoRA (4-bit) | Example GPU (QLoRA) |
|---|---|---|---|---|
| 3B–4B | ~48 GB | ~10 GB | ~5 GB | RTX 3060 12GB |
| 7B–8B | ~112 GB | ~16 GB | ~8 GB | RTX 4070 Ti 12GB |
| 13B | ~200 GB | ~28 GB | ~14 GB | RTX 4090 24GB |
| 34B | ~520 GB | ~70 GB | ~24 GB | RTX 4090 + offload |
| 70B | ~1 TB+ | ~140 GB | ~46 GB | A100 80GB |

These numbers assume a sequence length of at most 512 tokens and batch size 1 with gradient checkpointing. Longer sequences mainly add activation memory: doubling to 1,024 tokens can raise total usage by roughly 1.5–2×. Apple Silicon (M3 Pro and up) can handle 7B QLoRA using its unified CPU/GPU memory, but trains 3–5× slower than a dedicated NVIDIA GPU.

If you don't own the hardware, cloud spot instances are the most cost-efficient path: RunPod, Lambda Labs, and Vast.ai all offer A100 80GB at $1.50–$3/hour. A 7B fine-tune on 5,000 examples typically completes in 2–4 hours.

Dataset Preparation

Your dataset is the most important variable. A fine-tune is only as good as its training examples.

Format

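The standard for Supervised Fine-Tuning (SFT) in 2026 is JSONL: one JSON object per line, each holding a messages array of role/content turns (the conversational format often loosely called ChatML). Pretty-printed for readability, a single example looks like this:

```json
{"messages": [
  {"role": "system", "content": "You are a precise SQL query generator."},
  {"role": "user", "content": "Get all users who signed up last month."},
  {"role": "assistant", "content": "SELECT * FROM users WHERE created_at >= DATE_TRUNC('month', NOW() - INTERVAL '1 month') AND created_at < DATE_TRUNC('month', NOW());"}
]}
```

In the .jsonl file itself, each example occupies exactly one line.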

This format works directly with Axolotl, Unsloth, and TRL without transformation. Always apply the target model's chat template — using the wrong special tokens (e.g., Llama vs Mistral vs Qwen) can degrade results significantly.
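A small pre-flight check catches most format problems before they reach the trainer. This sketch uses only the standard library; the example record and the validation rules are illustrative assumptions, not a framework requirement. It serializes records as JSONL (one object per line), reads them back, and checks that each conversation uses known roles and ends on an assistant turn.

```python
import json

VALID_ROLES = {"system", "user", "assistant"}


def is_valid_example(example) -> bool:
    """Illustrative checks: non-empty messages list, known roles,
    string content, and a final assistant turn to learn from."""
    messages = example.get("messages") if isinstance(example, dict) else None
    if not isinstance(messages, list) or not messages:
        return False
    for m in messages:
        if not isinstance(m, dict) or m.get("role") not in VALID_ROLES:
            return False
        if not isinstance(m.get("content"), str):
            return False
    return messages[-1]["role"] == "assistant"


def to_jsonl(rows) -> str:
    # One compact JSON object per line; pretty-printing would break JSONL.
    return "".join(json.dumps(row, ensure_ascii=False) + "\n" for row in rows)


def from_jsonl(text: str):
    return [json.loads(line) for line in text.splitlines() if line.strip()]


examples = [
    {"messages": [
        {"role": "system", "content": "You are a precise SQL query generator."},
        {"role": "user", "content": "Count all users."},
        {"role": "assistant", "content": "SELECT COUNT(*) FROM users;"},
    ]},
    {"messages": [{"role": "user", "content": "No answer here."}]},  # invalid
]

good = [ex for ex in examples if is_valid_example(ex)]
text = to_jsonl(good)
print(f"{len(good)} valid example(s)")
# To write the file:
# with open("data/train.jsonl", "w", encoding="utf-8") as f:
#     f.write(text)
```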

Loss Masking

Only the assistant's response should contribute to the training loss. The prompt and system message should be masked so the model learns to generate outputs rather than to predict its own inputs. Whether this masking is on by default differs between frameworks and versions, even with the messages format, so confirm it for your setup before training.
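Under the hood, masking usually means setting the label of every non-assistant token to -100, the default ignore_index of PyTorch's cross-entropy loss, which Hugging Face models also use. The sketch below uses made-up token ids and assumes the conversation has already been split into per-role token segments; real pipelines derive those boundaries from the chat template.

```python
IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def build_labels(segments):
    """segments: list of (role, token_ids) in conversation order.

    Returns (input_ids, labels). Only assistant tokens keep their ids in
    labels; every other position is IGNORE_INDEX and contributes no loss.
    """
    input_ids, labels = [], []
    for role, ids in segments:
        input_ids.extend(ids)
        if role == "assistant":
            labels.extend(ids)
        else:
            labels.extend([IGNORE_INDEX] * len(ids))
    return input_ids, labels


# Hypothetical token ids for a system, user, and assistant turn.
ids, labels = build_labels([("system", [1, 2]), ("user", [3, 4, 5]), ("assistant", [6, 7])])
print(ids)
print(labels)
```

Hugging Face causal LM classes shift labels by one position internally, which is why labels can line up one-to-one with input_ids here.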

Dataset Size and Quality

- 500–1,000 examples: Style and format adaptation. Changing tone, output structure, or language register.

- 1,000–5,000 examples: Domain specialization. Teaching the model terminology, processes, and patterns from a specific field.

- 5,000–50,000 examples: Capability addition. Teaching genuinely new knowledge or complex reasoning patterns.

Quality beats quantity: 200 hand-curated examples typically outperform 2,000 scraped, noisy ones. Hold out 10–20% of the data as a validation split and monitor validation loss. If it starts rising while training loss keeps falling (commonly after epoch 1–2), the model is overfitting.
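A reproducible split matters as much as its size. This minimal sketch shuffles a copy of the data with a fixed seed (using Python's random.Random, an arbitrary choice; any seeded shuffle works) and holds out a fraction for validation, so reruns always see the same validation examples.

```python
import random


def train_val_split(rows, val_fraction=0.1, seed=42):
    """Shuffle a copy with a fixed seed, then hold out val_fraction of it."""
    if not 0 < val_fraction < 1:
        raise ValueError("val_fraction must be between 0 and 1")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # local RNG: global state untouched
    n_val = max(1, round(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


train, val = train_val_split(range(1000), val_fraction=0.1)
print(len(train), len(val))
```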

The 2026 Fine-Tuning Toolchain

Four frameworks dominate the space. They're not interchangeable — each has a distinct sweet spot.

Unsloth — Fastest on Consumer Hardware

Unsloth is the go-to choice if you're running on a single GPU and speed matters. It provides hand-written Triton kernels for LoRA training, and the project reports up to 74% lower memory usage and 2–5× higher throughput compared to vanilla Hugging Face Transformers.

Benchmark: Llama 3.1 8B on a single A100 40GB, where Unsloth completes in 3.2 hours vs Axolotl's 5.8 hours.

```python
from unsloth import FastLanguageModel

# Load the base model with 4-bit quantized weights (QLoRA mode).
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name="unsloth/Meta-Llama-3.1-8B-Instruct",
    max_seq_length=2048,
    dtype=None,         # auto-detect: bf16 where supported, else fp16
    load_in_4bit=True,  # QLoRA mode
)

# Attach LoRA adapters to every linear projection in the decoder blocks.
model = FastLanguageModel.get_peft_model(
    model,
    r=16,
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj",
                    "gate_proj", "up_proj", "down_proj"],
    lora_alpha=16,
    lora_dropout=0,
    bias="none",
    use_gradient_checkpointing="unsloth",  # Unsloth's memory-saving checkpointing
    random_state=42,
)
```

Axolotl — YAML-First Pipeline

Axolotl is the choice when you want a battle-tested pipeline configured entirely through YAML. It supports the widest range of training objectives: LoRA, QLoRA, full fine-tuning, DPO, GRPO, ORPO, reward modeling. Versions v0.28.0 and v0.29.0 shipped in February 2026 with active community support.

```yaml
# axolotl_config.yaml
base_model: meta-llama/Llama-3.1-8B-Instruct
model_type: LlamaForCausalLM
tokenizer_type: AutoTokenizer

load_in_4bit: true
adapter: qlora          # 4-bit frozen base + LoRA adapters

lora_r: 16
lora_alpha: 16
lora_dropout: 0.05
lora_target_modules:
  - q_proj
  - v_proj

datasets:
  - path: data/train.jsonl
    type: chat_template
val_set_size: 0.1

sequence_len: 2048
micro_batch_size: 2
num_epochs: 3
learning_rate: 0.0002   # 2e-4
output_dir: ./outputs/llama-3-1-8b-lora
```

```bash
axolotl train axolotl_config.yaml
```

LlamaFactory — GUI + Code

LlamaFactory (v0.9.4, released December 2025) includes a web UI for dataset management and training monitoring alongside its Python API. It's the best entry point if your team isn't comfortable writing training scripts from scratch. The rebranding from LLaMA-Factory to LlamaFactory in v0.9 aligned with support for non-Llama architectures including Qwen, Mistral, Gemma, and Phi.

Hugging Face TRL — Maximum Flexibility

TRL is the right choice when your training objective goes beyond standard SFT. GRPO, DPO, PPO, RLOO, KTO — if you're doing RLHF or preference optimization, TRL has the most correct implementations. It's not the fastest or the simplest, but it's the most flexible and best-maintained for advanced objectives.

LoRA Hyperparameters: What Actually Matters

Most guides list every hyperparameter. In practice, three settings drive most of the variation:

Rank (r)

- r=8: Simple style or format tasks. Minimum capacity.

- r=16: The recommended default. Works for instruction fine-tuning, style adaptation, and most domain specialization tasks.

- r=32–64: Complex domain shift, multi-task training, or when you have 10,000+ examples. Higher rank = more capacity = higher VRAM.

Don't tune rank obsessively. Start with r=16, get a working baseline, then increase only if validation loss stagnates.

Alpha (α)

Set alpha equal to rank (α=r) or double it (α=2r). The ratio α/r is the effective scaling factor for the adapter output. Microsoft's own LoRA examples use α=2r. The 2026 consensus from Unsloth's documentation: start with α=r=16, which applies a 1.0 scaling factor — straightforward and stable.
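The effect of the α/r ratio is plain arithmetic. This sketch (the helper name is illustrative) shows that α=r gives a scale of 1.0 and α=2r gives 2.0, and that keeping α at 16 while raising r to 64 quietly shrinks the adapter's contribution to 0.25, which is one reason to scale α together with r.

```python
def lora_scale(alpha: float, r: int) -> float:
    """Multiplier applied to B @ A in W = W0 + (alpha / r) * B @ A."""
    return alpha / r


for r, alpha in [(16, 16), (16, 32), (64, 16), (64, 64)]:
    print(f"r={r:>2}, alpha={alpha:>2} -> scale {lora_scale(alpha, r):.2f}")
```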

Target Modules

Use target_modules="all-linear" as your starting point in 2026. Earlier guidance recommended targeting only q_proj and v_proj, but current benchmarks show that including all linear layers (q, k, v, o, gate, up, down projections) consistently produces better results with minimal VRAM overhead.

DoRA

DoRA (Weight-Decomposed LoRA) decomposes updates into magnitude and direction components, improving convergence on complex tasks. It's available in PEFT and Unsloth with a single flag: use_dora=True. The 2026 Unsloth recommendation: use r=16 with DoRA enabled as your default starting configuration.
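Concretely, DoRA writes the adapted weight as a learned magnitude times a unit-norm direction: W′ = m · (W₀ + s·BA) / ‖W₀ + s·BA‖, with m initialized to the norms of W₀ and s the usual α/r scale. The pure-Python sketch below uses toy matrices and computes one norm per output row of a (d_out × d_in) weight. The axis the norm runs over is an implementation convention, so treat this choice as an assumption rather than a description of how PEFT or Unsloth implement it.

```python
import math


def matmul(X, Y):
    """(n x k) @ (k x m) on nested lists."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def row_norms(W):
    return [math.sqrt(sum(v * v for v in row)) for row in W]


def dora_weight(W0, A, B, m, scale):
    """W' = m * V / ||V|| with V = W0 + scale * B @ A, one norm per output row.

    W0: d_out x d_in (frozen), A: r x d_in, B: d_out x r,
    m: one trainable magnitude per output row. Assumes no row of V is all zeros.
    """
    BA = matmul(B, A)
    V = [[W0[i][j] + scale * BA[i][j] for j in range(len(W0[0]))]
         for i in range(len(W0))]
    norms = row_norms(V)
    return [[m[i] * V[i][j] / norms[i] for j in range(len(V[0]))]
            for i in range(len(V))]


W0 = [[3.0, 4.0], [1.0, 0.0]]
A = [[1.0, 2.0]]        # rank r = 1
m0 = row_norms(W0)      # magnitude initialized from W0: [5.0, 1.0]
B0 = [[0.0], [0.0]]     # B starts at zero, as in LoRA

# At initialization DoRA reproduces the frozen weight.
print(dora_weight(W0, A, B0, m0, scale=1.0))

# After training, direction comes from W0 + BA and each row's length from m.
W_new = dora_weight(W0, A, [[1.0], [0.5]], m=[2.0, 3.0], scale=1.0)
print([round(n, 6) for n in row_norms(W_new)])
```

Separating length from direction is what lets DoRA adjust how strongly each output responds without having to do it through the low-rank update alone.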

Common Mistakes and How to Avoid Them

Wrong chat template: Using Llama-3 tokens to fine-tune a Mistral model silently produces a broken adapter. Always verify the tokenizer's apply_chat_template output matches the model's expected format before training.
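The difference is easy to see side by side. These two functions are simplified, hand-written approximations of the ChatML format (used by Qwen models, among others) and the Llama 3 format. Real templates can add extra content, such as the date lines Llama 3.1 inserts into the system turn, so in practice compare against tokenizer.apply_chat_template(messages, tokenize=False) rather than against these sketches.

```python
def render_chatml(messages):
    """Approximate ChatML rendering of a full training conversation."""
    return "".join(f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages)


def render_llama3(messages):
    """Approximate Llama 3 rendering; the real 3.1 template adds more system text."""
    text = "<|begin_of_text|>"
    for m in messages:
        text += f"<|start_header_id|>{m['role']}<|end_header_id|>\n\n{m['content']}<|eot_id|>"
    return text


conversation = [
    {"role": "user", "content": "Get all users."},
    {"role": "assistant", "content": "SELECT * FROM users;"},
]
print(render_chatml(conversation))
print(render_llama3(conversation))
```

A model trained on the wrong rendering sees the other family's markers as ordinary text instead of the turn boundaries it learned in pretraining, which is how the breakage stays silent.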

Forgetting loss masking: If your framework applies loss to the full input (prompt + response), the model learns to predict its own questions. Always verify that only assistant turns contribute to training loss.

Overfitting on small datasets: On fewer than 500 examples, use 1–2 epochs maximum. Monitor validation loss. The moment validation loss starts rising while training loss continues falling, stop training.
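That rule is simple enough to check from logged losses. This sketch works per epoch on made-up numbers and returns the last epoch before validation loss turned upward, i.e. the checkpoint worth keeping. With the Hugging Face Trainer, EarlyStoppingCallback together with load_best_model_at_end automates the same idea at evaluation steps.

```python
def best_epoch_before_overfit(train_losses, val_losses):
    """Return the 1-based epoch to keep: the last one before validation loss
    rose while training loss kept falling. Returns None if that never happens."""
    for i in range(1, min(len(train_losses), len(val_losses))):
        val_rose = val_losses[i] > val_losses[i - 1]
        train_fell = train_losses[i] < train_losses[i - 1]
        if val_rose and train_fell:
            return i  # index i is epoch i + 1, so the previous epoch is i
    return None


# Hypothetical per-epoch losses.
train = [1.92, 1.41, 1.08, 0.79]
val = [1.85, 1.52, 1.58, 1.74]
print(best_epoch_before_overfit(train, val))
```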

Catastrophic forgetting: Aggressive fine-tuning can degrade the model's general capabilities. If MMLU score drops more than 2–3 points from baseline, reduce learning rate or training epochs, or try a smaller rank.

Not setting a random seed: Reproducibility matters. Always fix the seed (random_state=42 in Unsloth's get_peft_model, or seed in Hugging Face TrainingArguments; any fixed value works) so you can reproduce training runs.

Evaluating Your Fine-Tuned Model

A fine-tune without evaluation is guesswork. Three complementary approaches:

Task-Specific Metrics

Define a held-out test set of 100–200 examples. Run both the base model and your fine-tuned model on the same inputs. Measure the delta on your target metric — exact match, ROUGE-L for text generation, F1 for classification. The delta is what matters, not the absolute score.
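A minimal version of that comparison needs only the standard library. The sketch assumes lowercasing and whitespace tokenization, and computes ROUGE-L as the F1 of the longest common subsequence, close to what common ROUGE packages report but without their tokenizers or stemming. The predictions are hypothetical placeholders for your base and fine-tuned outputs.

```python
def exact_match(pred, ref):
    return pred.strip().lower() == ref.strip().lower()


def lcs_len(a, b):
    """Longest common subsequence length via dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]


def rouge_l_f1(pred, ref):
    p, r = pred.lower().split(), ref.lower().split()
    lcs = lcs_len(p, r) if p and r else 0
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(p), lcs / len(r)
    return 2 * precision * recall / (precision + recall)


def mean_score(metric, preds, refs):
    return sum(metric(p, r) for p, r in zip(preds, refs)) / len(refs)


refs = ["select count(*) from users;", "the cat is on the mat"]
base_preds = ["select * from users;", "a cat sat on a mat"]
tuned_preds = ["select count(*) from users;", "the cat sat on the mat"]

for name, metric in [("exact match", exact_match), ("ROUGE-L", rouge_l_f1)]:
    delta = mean_score(metric, tuned_preds, refs) - mean_score(metric, base_preds, refs)
    print(f"{name} delta: {delta:+.3f}")
```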

Perplexity

Perplexity measures how surprised your model is by a test corpus: it is the exponential of the average per-token negative log-likelihood. Lower perplexity on your domain data after fine-tuning confirms the model has internalized domain patterns. Importantly, check perplexity on a general corpus too: rising perplexity there indicates catastrophic forgetting.
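Given per-token log-probabilities (natural log) from an evaluation pass, the computation takes a few lines. This sketch pools all tokens across documents before averaging, which is how corpus-level perplexity is usually reported; the numbers in the example are made up.

```python
import math


def perplexity(token_logprobs):
    """exp(mean negative log-likelihood); expects natural-log probabilities."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


def corpus_perplexity(docs):
    # Pool tokens across documents instead of averaging per-document perplexities.
    return perplexity([lp for doc in docs for lp in doc])


# A model that assigns every token probability 0.5 has perplexity 2.
print(perplexity([math.log(0.5)] * 4))
```

Run the same function on base and fine-tuned log-probabilities for both your domain corpus and a general one; the direction of each change is what matters.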

General Capability (MMLU)

Run MMLU on both base and fine-tuned model using lm-evaluation-harness (EleutherAI). A drop of more than 2–3 points is a warning sign. A healthy fine-tune should maintain or improve MMLU on domain-adjacent subjects while general performance stays flat.

```bash
# Install evaluation harness
pip install lm-eval

# Run MMLU on your fine-tuned model
lm_eval --model hf \
    --model_args pretrained=./outputs/my-fine-tuned-model \
    --tasks mmlu \
    --device cuda:0 \
    --batch_size 4
```
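Once you have per-subject accuracies for both models (for example, extracted from lm-eval's results output; its exact format is not assumed here), the before/after check is a dictionary diff. This sketch uses hypothetical numbers, an unweighted mean across subjects (lm-eval's own aggregate may weight subjects differently), and the 2-point threshold from the text.

```python
def compare_mmlu(base, tuned, max_drop_points=2.0):
    """base, tuned: dicts of subject -> accuracy in [0, 1].

    Returns (unweighted mean delta in percentage points,
    subjects whose drop exceeds max_drop_points).
    """
    subjects = sorted(base.keys() & tuned.keys())
    if not subjects:
        raise ValueError("no subjects in common")
    deltas = {s: (tuned[s] - base[s]) * 100 for s in subjects}
    mean_delta = sum(deltas.values()) / len(deltas)
    flagged = [s for s in subjects if deltas[s] < -max_drop_points]
    return mean_delta, flagged


# Hypothetical accuracies for illustration only.
base = {"professional_law": 0.50, "high_school_mathematics": 0.40, "college_biology": 0.70}
tuned = {"professional_law": 0.55, "high_school_mathematics": 0.35, "college_biology": 0.70}
mean_delta, flagged = compare_mmlu(base, tuned)
print(f"mean delta: {mean_delta:+.2f} points; flagged: {flagged}")
```

A flat average can hide a sharp drop in one subject, which is why the sketch reports flagged subjects alongside the mean.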

LLM-as-Judge

For open-ended generation tasks, automated metrics miss quality. Have GPT-4 or Claude evaluate outputs on a 1–5 scale for helpfulness, accuracy, and style adherence. This is the most expensive evaluation method but often the most predictive of real-world user satisfaction.
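Most of the engineering in LLM-as-judge is a fixed rubric plus strict parsing of the reply. The sketch below only builds the prompt and parses the scores; the rubric wording and reply format are hypothetical, and sending the prompt to GPT-4, Claude, or another judge is left to whichever client you use.

```python
import re

CRITERIA = ("helpfulness", "accuracy", "style")


def build_judge_prompt(task, response):
    """Hypothetical rubric; adapt the criteria and wording to your task."""
    lines = "\n".join(f"{c}: <1-5>" for c in CRITERIA)
    return (
        "Rate the RESPONSE to the TASK from 1 (poor) to 5 (excellent) on each "
        f"criterion. Reply with exactly these lines and nothing else:\n{lines}\n\n"
        f"TASK:\n{task}\n\nRESPONSE:\n{response}"
    )


SCORE_LINE = re.compile(r"^[ \t]*(helpfulness|accuracy|style)[ \t]*:[ \t]*([1-5])[ \t]*$",
                        re.IGNORECASE | re.MULTILINE)


def parse_scores(reply):
    """Return {criterion: score}, or None if any criterion is missing."""
    scores = {name.lower(): int(value) for name, value in SCORE_LINE.findall(reply)}
    return scores if set(scores) == set(CRITERIA) else None


print(parse_scores("helpfulness: 4\naccuracy: 5\nstyle: 3"))
```

Scoring base and fine-tuned outputs with the identical prompt keeps the comparison fair, and rejecting malformed replies (None) is safer than guessing a score.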

The Full Fine-Tuning Workflow

To summarize the practical sequence for a 7B QLoRA fine-tune:

- Prepare dataset: 1,000+ examples in JSONL ChatML format, 90/10 train/val split

- Choose base model: Llama 3.1 8B Instruct, Qwen3-8B, or Ministral 8B are all strong starting points in 2026

- Configure training: r=16, α=16, target_modules="all-linear", DoRA enabled, 2–3 epochs, lr=2e-4

- Train with Unsloth: ~2–4 hours on A100, ~6–8 hours on RTX 4090

- Evaluate: Task metrics on held-out set + MMLU delta check

- Merge and export: model.save_pretrained_merged() to save full weights, or keep adapter-only for lightweight deployment

- Serve: GGUF quantization for Ollama or llama.cpp, or vLLM for high-throughput API serving

For production deployments, see our guides on self-hosting LLMs vs cloud APIs and running local models with Ollama.

FAQ

Q: Should I use LoRA or QLoRA?

If you have a 24 GB GPU and are fine-tuning a 7B model, use LoRA in 16-bit: you get somewhat better quality (about 5% by this guide's estimates) and usually faster training, since QLoRA spends extra compute dequantizing its 4-bit weights. If you need to fit a larger model on limited VRAM, or if you're running on 12 GB or less, QLoRA is the right choice. The quality gap (90–95% vs 80–90% of full fine-tuning) is acceptable for most production tasks.

Q: How many examples do I need?

500 high-quality examples are enough for style and format adaptation. 1,000–5,000 for domain specialization. Beyond 5,000, you're in diminishing returns territory unless you're adding genuinely new knowledge. Focus on quality: 200 hand-curated examples beat 2,000 scraped ones.

Q: Can I fine-tune on a Mac?

Yes, with Apple Silicon (M3 Pro or M4 Pro minimum). Unsloth supports the Apple MPS backend, and Apple's MLX framework (via the mlx-lm package) handles LoRA and QLoRA training. Expect 3–5× slower training than a comparable NVIDIA GPU: a 7B fine-tune that takes 3 hours on an RTX 4090 takes 10–15 hours on an M3 Max.

Q: Will fine-tuning hurt my model's general capabilities?

It can, if you overtrain or use too high a learning rate. Monitor MMLU scores before and after. A well-executed fine-tune maintains general capability while improving domain performance. The safest parameters: 1–3 epochs, lr=1e-4 to 2e-4, rank 16, gradient checkpointing enabled.

Q: How do I deploy my fine-tuned model?

Two paths: (1) Merge the adapter into the base model weights and export to GGUF format for Ollama/llama.cpp deployment — ideal for single-user or small-team use. (2) Keep the adapter separate and load it on top of the quantized base at inference time via vLLM or Hugging Face TGI — better for serving multiple users or multiple adapters on the same base model.

Key Takeaways

- LoRA reduces trainable parameters to 0.1–1% of the total by learning low-rank weight updates. It recovers 90–95% of full fine-tuning quality.

- QLoRA adds 4-bit quantization of the base model, cutting the memory needed for the frozen weights by ~75% (16 bits down to 4). Ideal for consumer GPUs. Quality: 80–90% of full fine-tuning.

- Hardware minimums: 7B model with QLoRA fits in 8 GB VRAM. 70B fits in 46 GB on a single A100 with QLoRA.

- Default hyperparameters: r=16, α=16, all-linear target modules, DoRA enabled. Start here and adjust if needed.

- Dataset quality beats quantity: 500 clean examples outperform 5,000 noisy ones for most adaptation tasks.

- Toolchain in 2026: Unsloth for speed on consumer hardware, Axolotl for YAML-driven pipelines, TRL for advanced training objectives.

- Always evaluate: Task-specific metrics + MMLU delta. A fine-tune that doesn't improve your target metric failed, no matter how low the training loss.

LoRA and QLoRA have made LLM fine-tuning genuinely accessible: a $300 GPU, 500 curated examples, and an afternoon are enough to specialize a capable open-weight model on your domain. The toolchain is mature, the documentation is solid, and the quality delta from full fine-tuning is narrow enough for production use. If you have a task with clear structure and enough examples to define it, there is no longer a good reason not to fine-tune.

